In 2026, the most dangerous bugs aren’t the ones that crash your program; they are the ones that don’t. These “silent errors” (off-by-one mistakes, race conditions, logical leaks, and data drift) can persist in production for months, quietly corrupting databases or skewing analytics. Traditional debugging is a reactive hunt for the “why” after a crash. AI-driven debugging turns this into a proactive, system-wide analysis that flags “what might happen,” often before the code ever runs in production.

The Shift from Stack Traces to System Context

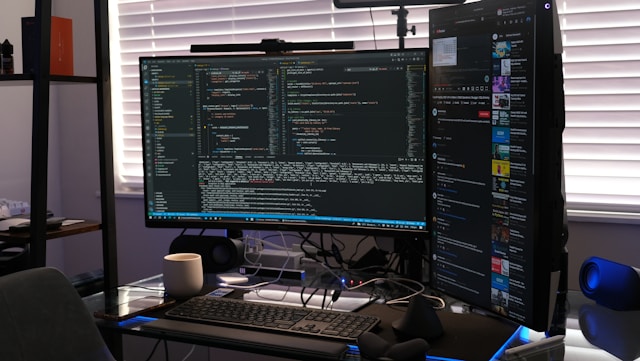

Historically, debugging began with a stack trace—a literal map of where the code failed. But silent errors often produce no trace. In 2026, tools like Cursor Bugbot and CodeRabbit have shifted the focus toward “Deep Context Analysis.”

Instead of looking at a single file, these AI agents index your entire repository and its dependency graph. They understand how a change in a shared utility library might silently break a validation check in a seemingly unrelated microservice. By combining Abstract Syntax Tree (AST) evaluation with generative reasoning, these tools catch the logical “spec slips” that traditional unit tests miss. CodeRabbit, for instance, now reports a 46% accuracy rate in detecting real-world runtime bugs—specifically targeting those subtle edge cases that human reviewers typically overlook.
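To make the AST half of that combination concrete, here is a minimal sketch using Python’s standard `ast` module. It flags exception handlers whose body is only `pass`, one common way a failure gets silenced. This is not how Cursor Bugbot or CodeRabbit work internally; the function name `find_swallowed_exceptions` and the sample source are hypothetical, chosen only for illustration.

```python
import ast

def find_swallowed_exceptions(source: str) -> list[int]:
    """Return line numbers of `except` handlers whose body is only `pass`."""
    tree = ast.parse(source)
    flagged = []
    for node in ast.walk(tree):
        if isinstance(node, ast.ExceptHandler):
            # A handler that does nothing hides the failure from callers and logs.
            if all(isinstance(stmt, ast.Pass) for stmt in node.body):
                flagged.append(node.lineno)
    return sorted(flagged)

# Hypothetical code under review: a bad input silently becomes a price of 0.0.
SAMPLE = '''
def load_price(raw):
    try:
        return float(raw)
    except ValueError:
        pass
    return 0.0
'''

if __name__ == "__main__":
    print(find_swallowed_exceptions(SAMPLE))  # [5]
```

A purely syntactic rule like this only finds the pattern. Judging whether the swallowed exception actually matters in a given service is the part the article attributes to generative reasoning.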

Agentic Debugging: The Autonomous Investigator

The standout innovation of the past year is the “Agentic Debugger.” Unlike a static linter, an agent like Claude Code (powered by Opus 4.6) acts as an autonomous investigator.

- The “Plan-Execute-Verify” Loop: When you report a strange behavior, the agent doesn’t just guess. It reads the relevant files, formulates a hypothesis, writes a targeted test case to “prove” the error exists, and then iterates on a fix.
- Trace-Driven Debugging: Emerging frameworks like TraceCoder analyze execution logs and waveforms in real time. If a function is returning a “correct” type but an “illogical” value, the AI traces the data flow backward through the execution history to find the exact point of semantic drift.
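The backward walk described for trace-driven debugging can be sketched in a few lines. This is an illustrative simplification, not TraceCoder’s actual algorithm: `TraceEvent`, `find_drift_origin`, the plausibility check, and the recorded values are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

@dataclass
class TraceEvent:
    step: str      # name of the function that produced the value
    value: float   # value recorded after that step ran

def find_drift_origin(trace: list[TraceEvent],
                      is_plausible: Callable[[float], bool]) -> Optional[TraceEvent]:
    """Walk the trace backward and return the earliest event in the trailing
    run of implausible values, or None if the final value looks fine."""
    origin = None
    for event in reversed(trace):
        if is_plausible(event.value):
            break  # everything up to this point still looked sane
        origin = event
    return origin

# Hypothetical trace of an order total moving through a pipeline.
trace = [
    TraceEvent("parse_cart", 120.0),
    TraceEvent("apply_discount", -30.0),  # right type, illogical value
    TraceEvent("add_tax", -32.4),
    TraceEvent("round_total", -32.0),
]

if __name__ == "__main__":
    origin = find_drift_origin(trace, lambda total: total >= 0)
    print(origin.step if origin else "no drift")  # apply_discount
```

Every value here is a valid float, so a type checker would not complain. The drift only shows up when each step is checked against a domain rule such as “an order total is never negative.”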

Predictive Debugging: Finding the Bug Before It Exists

We are entering the era of “Predictive Debugging,” where AI identifies “code smells” that correlate with future failures. Tools like DebuGPT and Safurai monitor your codebase in real time, flagging patterns that historically lead to race conditions or memory leaks.

- Anomaly Detection: In financial and high-stakes sectors, AI algorithms now scan transaction processing code to detect subtle anomalies that indicate fraud or logic errors.
- Automated Root Cause Analysis (RCA): When a bug is found, AI-powered RCA can reduce resolution time by up to 45%. By sifting through mountains of telemetry and logs, the AI can point directly to the origin of an issue, explaining the complex interaction in plain language rather than requiring a developer to manually “bisect” the git history.
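As a rough picture of what “pointing to the origin” means at the log level, the sketch below groups records by request and reports the earliest warning or error in each one. It is a deliberately naive stand-in for an RCA tool, and the record layout, service names, and messages are invented for the example.

```python
from collections import defaultdict

# Hypothetical log records: (timestamp, trace_id, service, level, message)
LOGS = [
    (3, "t1", "billing", "ERROR", "total is negative"),
    (1, "t1", "cart", "INFO", "cart parsed"),
    (2, "t1", "pricing", "WARN", "discount exceeds subtotal"),
    (1, "t2", "cart", "INFO", "cart parsed"),
    (2, "t2", "billing", "INFO", "charged"),
]

def first_anomaly_per_trace(logs):
    """For each trace containing a WARN or ERROR, return the earliest such record."""
    by_trace = defaultdict(list)
    for record in logs:
        by_trace[record[1]].append(record)
    origins = {}
    for trace_id, records in by_trace.items():
        # Tuples sort by their first field, so this orders records by timestamp.
        suspicious = [r for r in sorted(records) if r[3] in ("WARN", "ERROR")]
        if suspicious:
            origins[trace_id] = suspicious[0]
    return origins

if __name__ == "__main__":
    for trace_id, record in first_anomaly_per_trace(LOGS).items():
        print(trace_id, record[2], record[4])
```

In this toy data the loud failure is the billing error, but the earliest suspicious record in the same trace is the pricing warning, which is the more likely origin.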

The Multi-Agent Validation Strategy

A significant challenge in 2026 is that AI can sometimes “fail silently” itself. To counter this, professional workflows have adopted Multi-Agent Validation.

- The Executor: One AI agent writes or fixes the code.
- The Validator: A second, independent agent reviews the execution, checking if the correct tools were used and if the output is consistent.
- The Critic: A third agent provides the final “Approved” or “Rejected” status with explicit reasoning.

This structural separation of concerns ensures that a single AI’s “hallucination” or logical slip is caught by a peer agent before it reaches the main branch.
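The three roles can be sketched as a small pipeline. In this hedged example plain Python functions stand in for the agents, with no model calls involved; the names `executor`, `validator`, `critic`, and `Verdict`, and the `mean` function being “fixed,” are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Verdict:
    status: str      # "Approved" or "Rejected"
    reasoning: str

def executor() -> Callable[[list[int]], float]:
    """Stand-in for the agent that writes the fix: returns a candidate `mean`."""
    def mean(values: list[int]) -> float:
        return sum(values) / len(values) if values else 0.0
    return mean

def validator(candidate: Callable[[list[int]], float]) -> list[str]:
    """Stand-in for the independent reviewer: runs checks and reports failures."""
    cases = [([2, 4], 3.0), ([5], 5.0), ([], 0.0)]
    failures = []
    for values, expected in cases:
        actual = candidate(values)
        if actual != expected:
            failures.append(f"mean({values}) returned {actual}, expected {expected}")
    return failures

def critic(failures: list[str]) -> Verdict:
    """Stand-in for the final gate: a status plus explicit reasoning."""
    if failures:
        return Verdict("Rejected", "; ".join(failures))
    return Verdict("Approved", "all validator checks passed")

if __name__ == "__main__":
    print(critic(validator(executor())))
```

The point of the separation is that the validator’s test cases are written without reference to the executor’s implementation, so a slip in one is less likely to be mirrored in the other.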

The End of the “Works on My Machine” Era

AI is effectively ending the “works on my machine” excuse by reconstructing failure scenarios from production environments. Using Coverage-Guided Test Generation, AI agents can look at “cold spots” in your code—areas that haven’t been triggered by current tests—and synthesize directed random tests to force those paths to execute. This ensures that the most obscure “black swan” errors are surfaced during the CI/CD pipeline rather than on a Friday night in production.
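A toy version of that idea: track which branches the existing tests reach, then keep only those random inputs that reach a branch nothing else has. This sketch uses plain random search with coverage feedback rather than a real coverage tool or an AI agent, and `route_payment`, its thresholds, and the input range are hypothetical.

```python
import random

BRANCHES = {"refund", "standard", "review"}

def route_payment(amount: int, hit: set[str]) -> str:
    """Toy function under test; `hit` records which branch ran."""
    if amount < 0:
        hit.add("refund")
        return "refund"
    if amount > 10_000:
        hit.add("review")
        return "manual-review"
    hit.add("standard")
    return "charge"

def generate_tests(existing: list[int], attempts: int = 500, seed: int = 0) -> list[int]:
    """Keep only random inputs that reach a branch no earlier test reached."""
    rng = random.Random(seed)
    covered: set[str] = set()
    for amount in existing:
        route_payment(amount, covered)
    new_tests = []
    for _ in range(attempts):
        if covered == BRANCHES:
            break  # no cold spots left
        candidate = rng.randint(-20_000, 20_000)
        hit: set[str] = set()
        route_payment(candidate, hit)
        if not hit <= covered:  # candidate warmed up a cold spot
            covered |= hit
            new_tests.append(candidate)
    return new_tests

if __name__ == "__main__":
    # The existing tests only exercise the "standard" branch.
    print(generate_tests([10, 250]))
```

Real coverage-guided generators instrument the code automatically and bias their inputs toward branch conditions; the manual `hit` set here only stands in for that instrumentation.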

Conclusion: From Detective to Director

In 2026, the developer’s role in debugging has transitioned from being the primary detective to being the “Director of Investigation.” You no longer spend your hours setting breakpoints and inspecting variables; you spend them reviewing the “failing hypotheses” and “proposed patches” generated by your AI agents.

By leveraging predictive analysis, multi-agent validation, and autonomous RCA, we are building software that is not just more functional, but inherently more resilient. The silent error is no longer an invisible threat—it is a solvable problem, provided you have the right AI partner to help you see through the noise.

Have you noticed any recurring patterns in your own projects where silent errors tend to hide—perhaps in complex state management or third-party API integrations?