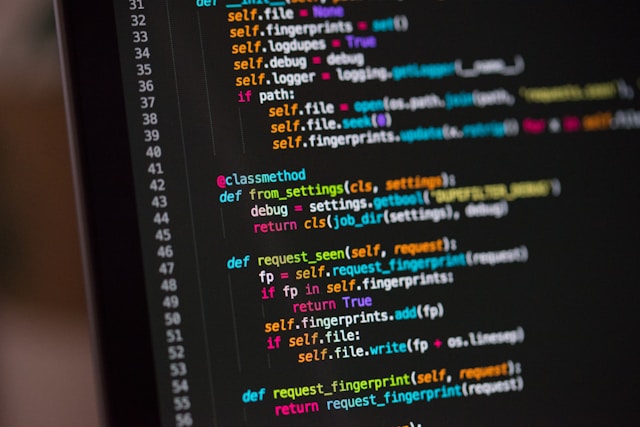

The software engineering landscape is undergoing its most profound transformation since the transition from punch cards to the integrated development environment. The introduction of artificial intelligence code assistants has radically compressed the time required to translate a conceptual idea into working source code. We have entered the co-pilot era, an environment where lines of boilerplate, complex regular expressions, and entire algorithmic structures can be generated by a machine in a fraction of a second based on a simple natural language prompt.

This technological leap promises an unprecedented surge in developer velocity. It eliminates the tedious, repetitive elements of coding, allowing engineers to bypass the mechanical friction of typing and syntax lookup.

However, this newfound speed introduces a subtle psychological trap. When a tool makes generating code nearly frictionless, it alters the cognitive posture of the developer. It shifts your primary professional role from that of a deliberate creator to that of a passive observer.

If you allow the AI to dictate the logic, structure, and architecture of your applications without rigorous intervention, you enter a state of cognitive atrophy. Your critical thinking skills—the very foundation of elite engineering—begin to erode. To survive and thrive in this automated ecosystem, you must master the art of using an AI code assistant as an administrative accelerant while fiercely protecting your intellectual sovereignty.

The Mirage of the Perfect Suggestion

To use an AI code assistant safely, you must first understand the underlying mechanics of how these tools operate. An AI model does not comprehend computer science concepts, business logic, or system architecture in the way a human engineer does. It is, at its core, a highly advanced probabilistic sequence generator. It analyzes the context available to it (your current file, your open tabs, and whatever parts of your repository the tool can see) and then predicts the tokens most likely to follow, based on the patterns it absorbed from millions of open-source repositories during its training phase.
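As a deliberately tiny illustration of "predicting the most likely next token", the Python sketch below counts which token follows which in a three-line corpus and returns the most frequent follower. It is not how production assistants are built (they use large neural networks over far more context). The corpus and the function names `train_bigrams` and `predict_next` are invented for this example.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count, for every token, which tokens were seen immediately after it."""
    counts = defaultdict(Counter)
    for line in corpus:
        tokens = line.split()
        for current, following in zip(tokens, tokens[1:]):
            counts[current][following] += 1
    return counts


def predict_next(counts, token):
    """Return the most frequent follower of `token`, or None if it was never seen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical "training data": three lines of code-like text.
corpus = [
    "for i in range ( n )",
    "for item in items",
    "for i in range ( 10 )",
]
model = train_bigrams(corpus)

print(predict_next(model, "in"))     # range  ("range" follows "in" twice, "items" once)
print(predict_next(model, "while"))  # None   (never seen, so no prediction)
```

The toy model picks "range" purely because it was the more common continuation, not because it knows what a loop is. That is the sense in which a suggestion can look right without having been reasoned about.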

This statistical architecture creates a powerful cognitive mirage: the illusion of correct logic. Because the code generated by an AI assistant is usually syntactically clean, consistently indented, and conventionally named, your brain readily categorizes it as high-quality, trustworthy software. This is automation bias: the human tendency to favor suggestions from automated decision-making systems, even when contradictory evidence shows they are incorrect.

In reality, AI assistants are highly prone to hallucinating non-existent API endpoints, introducing subtle security vulnerabilities, and missing deep logical edge cases unique to your business domain. If you accept suggestions mindlessly with a tap of the tab key, you are essentially copy-pasting code written by a statistical model that has never executed a program or debugged a system failure. You become a vehicle for the rapid accumulation of unverified code, building a fragile architecture that will inevitably break under production stress.
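One cheap guard against a hallucinated library call is to confirm that the suggested name exists before building on it. The sketch below is a minimal Python check, assuming the suggestion refers to an importable module. The helper name `api_exists` is hypothetical, and a passing check only proves the name exists, not that the suggestion uses it correctly.

```python
import importlib


def api_exists(module_name: str, attr_path: str) -> bool:
    """Return True if `module_name` imports and exposes the dotted `attr_path`."""
    try:
        obj = importlib.import_module(module_name)
    except ImportError:
        return False
    # Walk the dotted path one attribute at a time, e.g. "path.join" on os.
    for part in attr_path.split("."):
        if not hasattr(obj, part):
            return False
        obj = getattr(obj, part)
    return True


print(api_exists("json", "loads"))    # True: a real standard-library function
print(api_exists("json", "parse"))    # False: plausible-looking, but not in Python's json module
print(api_exists("os", "path.join"))  # True
```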

The Cognitive Interrogation Framework

Leveraging AI without losing your critical thinking requires the implementation of a strict cognitive interrogation framework. You must transition your mind from the role of a passive recipient to that of a senior code reviewer evaluating the work of an eager but error-prone junior developer. Every line of code generated by an AI assistant must be assumed guilty of a logical error until proven innocent through rigorous manual analysis.

When an AI assistant presents a code suggestion, do not look at it as a finished solution. Instead, freeze your fingers and subject the suggestion to three specific, critical questions before accepting it:

The Question of Explicit Intent

Do I thoroughly understand every character, parameter, and method invocation within this suggestion?

If an AI generates a dense bitwise operation, a complex database query, or a nested array manipulation that looks functional but confuses your immediate understanding, do not accept it. The moment you introduce code into a repository that you cannot explain line-by-line, you lose ownership of your codebase.

If that code fails at three o’clock in the morning on a production server, you will struggle to debug it, because you never built the mental model behind it. Use the AI to explain the syntax if necessary, but never accept code that surpasses your current comprehension.

The Question of Edge-Case Resilience

Where is the hidden failure point in this generated logic?

AI models are trained heavily on the “happy paths” found in documentation and tutorials. Consequently, the code they generate is structurally optimized for ideal scenarios. It assumes the network will never drop, the user input will always match the expected data type, and the database record will always exist.

Before you accept a block of generated code, mentally subject it to hostile data. Ask yourself how the logic will behave if a null value is passed, if an array arrives empty, or if an external service returns a timeout error. You will frequently find that you need to wrap the AI’s clean syntax in robust, human-designed error handling and defensive checks.
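The contrast can be sketched in a few lines of Python. The first function below is the kind of happy-path code described above. The second adds the null and empty-input checks, and the third shows a timeout fallback. All names here (`average_happy_path`, `average_defensive`, `call_with_fallback`, `slow_service`) are hypothetical, and returning `None` or a default is only one possible policy; raising a domain-specific error may suit your system better.

```python
from typing import Callable, Optional, Sequence


def average_happy_path(values):
    # Happy-path version: assumes a non-empty sequence of numbers.
    # Raises TypeError on None and ZeroDivisionError on an empty list.
    return sum(values) / len(values)


def average_defensive(
    values: Optional[Sequence[Optional[float]]],
) -> Optional[float]:
    """Return the mean of the present values, or None when there is nothing to average."""
    if values is None:
        return None
    present = [v for v in values if v is not None]
    if not present:
        return None
    return sum(present) / len(present)


def call_with_fallback(fetch: Callable[[], float], default: float) -> float:
    """Call `fetch`; if it times out, return `default` instead of crashing."""
    try:
        return fetch()
    except TimeoutError:
        return default


def slow_service() -> float:
    # Stand-in for an external call that times out.
    raise TimeoutError("simulated upstream timeout")


print(average_defensive([10.0, None, 20.0]))  # 15.0
print(average_defensive([]))                  # None
print(call_with_fallback(slow_service, 0.0))  # 0.0
```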

The Question of Architectural Harmony

Does this specific implementation align with the broader patterns and constraints of my entire system?

An AI code assistant operates within a limited context window. It sees the file you are editing and some surrounding files, but it lacks a holistic, big-picture understanding of your company’s infrastructure, scaling challenges, and long-term business goals.

The tool might suggest an elegant, isolated function that violates your team’s established design patterns, leaks memory under sustained production load, or creates data redundancy across your services. You must serve as the guardian of architectural consistency, overriding local optimizations to protect global system integrity.

Inverting the Workflow: The Intentional Design Protocol

The standard, destructive workflow in the co-pilot era looks like this: write a brief comment, let the AI generate twenty lines of code, read through it superficially, accept it, and move on. This workflow puts the machine in the driver’s seat of the creative process, forcing the human brain into a purely reactive state.

To preserve your critical thinking, you must invert this sequence through the Intentional Design Protocol. You must resolve the problem completely in the theater of your mind before you allow the AI tool to type a single character.

Before you invoke an AI prompt or write a comment meant to trigger a suggestion, step away from your development environment. Open a physical notebook or a digital whiteboard and map out the solution using first-principles thinking.

Define the input data structures, outline the step-by-step algorithmic transformation required, and sketch the system boundaries. Write down the explicit logic in plain English or pseudocode.

Once the conceptual blueprint is completely crystallized in your mind, open your code editor and use the AI code assistant purely as a high-speed typist. Guide the model with highly specific, granular prompts that reflect your pre-determined architecture.

If the AI suggests a path that diverges from your mental blueprint, reject it instantly and steer it back with more precise instructions. By ensuring that the creative, analytical spark originates exclusively within your own mind, you use the AI to accelerate execution speed without outsourcing your cognitive responsibility.
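One way to make the blueprint concrete before prompting is to write the contract yourself: a typed signature, a docstring stating the rules, and a few executable expectations. In the Python sketch below, the problem (merging overlapping intervals) and every name are hypothetical. The function body stands in for the part you might let the assistant type, and the assertions are the human-authored specification it must satisfy.

```python
from typing import List, Tuple

Interval = Tuple[int, int]


def merge_intervals(intervals: List[Interval]) -> List[Interval]:
    """Blueprint, written before any suggestion is requested:

    1. Sort the intervals by start.
    2. Walk them once; extend the last merged interval when the next one
       overlaps or touches it, otherwise start a new merged interval.
    3. Empty input yields an empty list.
    """
    merged: List[Interval] = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            last_start, last_end = merged[-1]
            merged[-1] = (last_start, max(last_end, end))
        else:
            merged.append((start, end))
    return merged


# Human-authored expectations: any generated body must pass these.
assert merge_intervals([]) == []
assert merge_intervals([(1, 3), (2, 6), (8, 10)]) == [(1, 6), (8, 10)]
assert merge_intervals([(5, 7), (1, 5)]) == [(1, 7)]
assert merge_intervals([(1, 10), (2, 3)]) == [(1, 10)]
```

If a suggestion fails one of these expectations or ignores a step in the docstring, that is the divergence from the blueprint that calls for rejection.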

The Threat of Syntactical Dependence

A growing danger among developers who rely heavily on AI assistance from the early stages of their careers is the development of syntactical dependence. Because the AI is always available to close a bracket, import a library, or construct a loop, the developer never commits these constructs to long-term memory. The developer then stalls if the internet connection drops, the AI service experiences an outage, or they are asked to write code on a whiteboard during a technical evaluation.

To combat this dependency and keep your cognitive wiring sharp, implement regular intervals of deliberate analog coding. Dedicate specific sessions each week to writing code with your AI assistants entirely disabled.

Force yourself to navigate documentation manually, trace type definitions through your source code using your IDE’s native tools, and construct complex logic from memory. This practice acts as a form of resistance training for your brain. It ensures that your underlying mental models remain sharp and independent of external tooling. You must remain a fully capable software engineer who chooses to use an AI tool, rather than a clerk who is helpless without it.

The Creative Asymmetry: What AI Cannot Replicate

As artificial intelligence models grow increasingly sophisticated, their capacity to produce syntax will approach absolute perfection. The cost of generating standard code will continue to drop toward zero. In this landscape, the value of a developer cannot be found in tasks that can be automated; it must be found in the creative asymmetry—the uniquely human cognitive capabilities that software models cannot replicate.

AI cannot exercise human empathy. It cannot interview a frustrated user, look past their contradictory feature requests, and uncover the core psychological friction they are experiencing in their workflow.

AI cannot navigate organizational politics. It cannot align three distinct engineering teams with conflicting priorities behind a singular architectural vision.

AI cannot invent entirely new computing paradigms. It can only recombine existing historical data patterns found in its training sets.

Your true superpower in the co-pilot era is your capacity for abstract systems design, strategic trade-off analysis, and human-centric problem-solving. When you offload the low-level mechanics of syntax generation to an AI assistant, you should not use that saved time to simply produce more lines of code. Instead, reinvest that newly acquired cognitive bandwidth into high-level thinking.

Spend your hours studying deep architectural principles, analyzing data compliance, optimizing the user experience, and ensuring your systems are resilient against real-world chaos. Elevate your positioning from a mechanical coder to a high-level digital engineer.

Commander of the Architecture

The co-pilot era does not spell the end of the software engineer; it represents the birth of a vastly more powerful, high-leverage class of professionals. The tools available to us are magnificent servants, but they are catastrophic masters. If you treat your AI assistant as a replacement for your intellect, you surrender your autonomy and reduce your value to that of a machine operator.

But if you approach these tools with a posture of rigorous skepticism, intentional design, and cognitive vigilance, you unlock an extraordinary professional advantage. You transform the AI into a force multiplier that executes your explicit commands at lightning speed, freeing your mind to focus entirely on the grand architecture of your systems.

Tomorrow, when you sit down at your workstation, look at your AI code assistant with fresh, critical eyes. Demand absolute clarity from every suggestion, challenge every happy-path assumption, and enforce structural alignment across your entire codebase. Claim full authorship over your logic, protect your critical thinking, and lead the technological revolution with an unshakeable, human mind.